Method

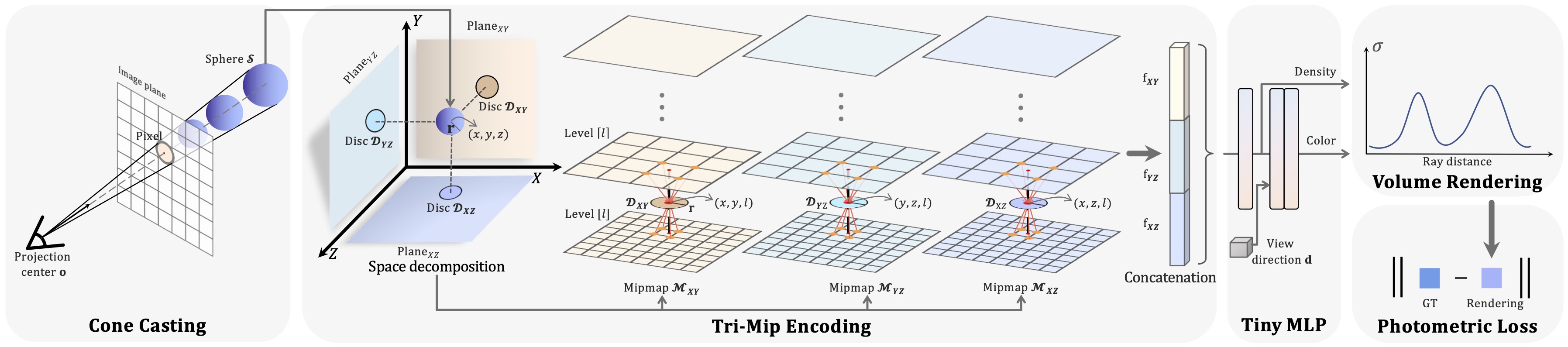

To render a pixel, we emit a cone from the camera’s projection center through the pixel on the image plane and cast a set of spheres inside the cone. Next, the spheres are orthogonally projected onto the three planes and featurized by our Tri-Mip encoding. The resulting feature vector is then fed into a tiny MLP, which non-linearly maps it to density and color. Finally, the densities and colors of the spheres are integrated using volume rendering to produce the final color of the pixel.
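The following minimal Python sketch walks through the four steps above for a single pixel. It is an illustration under simplifying assumptions, not the Tri-MipRF implementation. It assumes the scene is normalized to the unit cube, stores one scalar feature per texel, and builds each mip level by 2×2 box filtering. It takes the sphere radius to be the cone radius at depth `t` and picks a fractional mip level from the ratio of sphere diameter to base texel size. It also replaces the tiny MLP with a hypothetical hand-written decoder (`toy_decoder`). All function names are illustrative.

```python
import math

# Illustrative sketch of the per-pixel pipeline; NOT the Tri-MipRF code.
# Assumptions: scene normalized to [0, 1]^3, one scalar feature per texel,
# sphere radius = cone radius at depth t, toy decoder instead of the tiny MLP.


def build_mipmap(base):
    """Box-filter a square 2^n x 2^n grid into a list of mip levels."""
    levels = [base]
    while len(levels[-1]) > 1:
        prev, n = levels[-1], len(levels[-1]) // 2
        levels.append([[(prev[2 * i][2 * j] + prev[2 * i + 1][2 * j]
                         + prev[2 * i][2 * j + 1] + prev[2 * i + 1][2 * j + 1]) / 4.0
                        for j in range(n)] for i in range(n)])
    return levels


def bilinear(grid, u, v):
    """Bilinearly interpolate grid[row][col] at (u, v) in [0, 1]^2."""
    n = len(grid)
    x = min(max(u * n - 0.5, 0.0), n - 1.0)  # texel centers sit at (i + 0.5) / n
    y = min(max(v * n - 0.5, 0.0), n - 1.0)
    x0, y0 = int(x), int(y)
    x1, y1 = min(x0 + 1, n - 1), min(y0 + 1, n - 1)
    fx, fy = x - x0, y - y0
    top = grid[y0][x0] * (1 - fx) + grid[y0][x1] * fx
    bottom = grid[y1][x0] * (1 - fx) + grid[y1][x1] * fx
    return top * (1 - fy) + bottom * fy


def query_mip(levels, u, v, radius):
    """Blend two levels whose texel size brackets the sphere diameter."""
    base_texel = 1.0 / len(levels[0])
    lod = math.log2(max(2.0 * radius / base_texel, 1.0))
    lod = min(lod, len(levels) - 1.0)
    l0 = int(lod)
    l1 = min(l0 + 1, len(levels) - 1)
    w = lod - l0
    return (1 - w) * bilinear(levels[l0], u, v) + w * bilinear(levels[l1], u, v)


def tri_mip_encode(planes, center, radius):
    """Project the sphere center onto the XY, XZ, YZ planes and query each mipmap."""
    x, y, z = center
    coords = [(x, y), (x, z), (y, z)]
    return [query_mip(p, u, v, radius) for p, (u, v) in zip(planes, coords)]


def toy_decoder(feat):
    """Hypothetical stand-in for the tiny MLP: 3 features -> (density, rgb)."""
    density = 10.0 * max(sum(feat), 0.0)
    rgb = [min(max(f, 0.0), 1.0) for f in feat]
    return density, rgb


def render_pixel(planes, origin, direction, pixel_radius, ts):
    """Alpha-composite spheres placed at the midpoints of the intervals in ts."""
    color, transmittance = [0.0, 0.0, 0.0], 1.0
    for t_near, t_far in zip(ts[:-1], ts[1:]):
        t, delta = 0.5 * (t_near + t_far), t_far - t_near
        center = tuple(o + t * d for o, d in zip(origin, direction))
        radius = t * pixel_radius  # the cone widens linearly with depth
        sigma, rgb = toy_decoder(tri_mip_encode(planes, center, radius))
        alpha = 1.0 - math.exp(-sigma * delta)
        for c in range(3):
            color[c] += transmittance * alpha * rgb[c]
        transmittance *= 1.0 - alpha
    return color


# Usage: a constant feature field and one ray along +z through the cube.
flat = build_mipmap([[0.5] * 8 for _ in range(8)])
pixel_rgb = render_pixel([flat, flat, flat], (0.5, 0.5, 0.0), (0.0, 0.0, 1.0),
                         0.01, [0.1 * k for k in range(11)])
print(pixel_rgb)
```

The point of the pre-filtered lookup is that a large sphere (far from the camera or seen at low resolution) is served by a coarse, already-averaged level in a single query, rather than by averaging many fine-level samples.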

Results

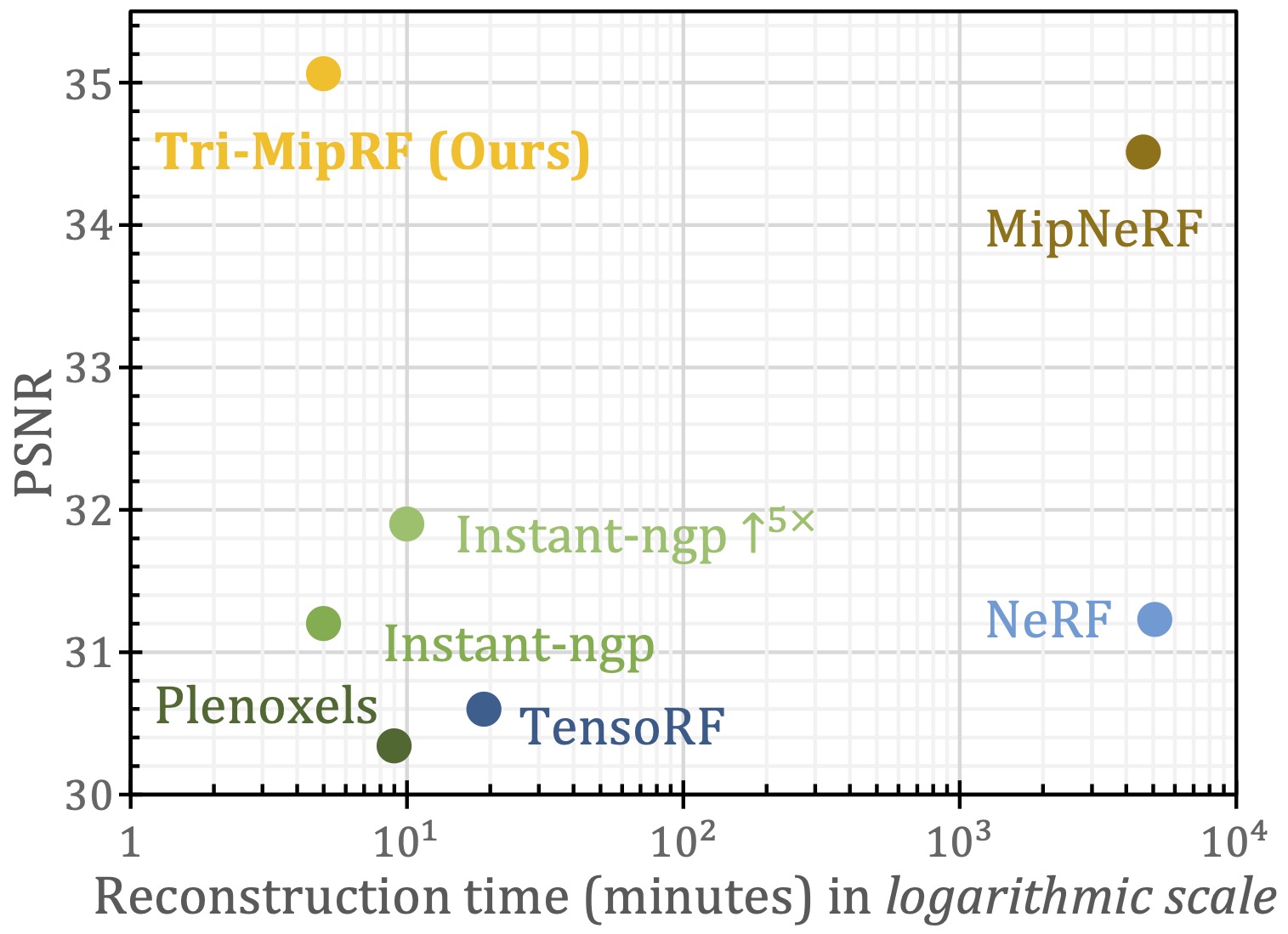

Quality vs. Reconstruction Time

Our Tri-MipRF achieves state-of-the-art rendering quality while remaining efficient to reconstruct, compared with cutting-edge radiance field methods such as NeRF, MipNeRF, Plenoxels, TensoRF, and Instant-ngp. Equipping Instant-ngp with super-sampling (denoted Instant-ngp↑5×) improves its rendering quality to some extent but significantly slows down reconstruction.

In-the-wild Captures

To further demonstrate its applicability, we captured several objects in the wild, ran structure-from-motion (SfM) on each sequence to estimate the camera’s intrinsic and extrinsic parameters, and applied Tri-MipRF to reconstruct them. We show three example results here: the rendered novel views faithfully reproduce detailed structures and appearance, and the PSNR/SSIM values further support the applicability of our method.
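For reference, PSNR follows the standard definition $\mathrm{PSNR} = 10\log_{10}(\mathrm{MAX}^2/\mathrm{MSE})$. The sketch below is a plain-Python version assuming pixel values in `[0, max_val]` flattened into lists. It is not the evaluation script used for the numbers above. SSIM is more involved (local means, variances, and covariances over windows) and is not sketched here.

```python
import math


def psnr(pred, target, max_val=1.0):
    """Peak signal-to-noise ratio in dB between two equal-length pixel lists."""
    if len(pred) != len(target) or not pred:
        raise ValueError("inputs must be non-empty and the same length")
    mse = sum((p - t) ** 2 for p, t in zip(pred, target)) / len(pred)
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_val ** 2 / mse)


# Usage: an MSE of 0.01 on [0, 1] images corresponds to 20 dB.
print(psnr([0.5, 0.5], [0.6, 0.4]))
```

For 8-bit images stored as integers, pass `max_val=255`.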

Related Links

Zip-NeRF addresses the same problem, efficient anti-aliasing, with a multi-sampling-based method, whereas our method is pre-filtering-based.
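The difference between the two families can be illustrated on a toy 1D signal. Multi-sampling approximates the average over a footprint by issuing several point lookups inside it. Pre-filtering builds a box-filtered pyramid once and answers each query with a single lookup at the level matching the footprint. This is a hypothetical illustration only. Zip-NeRF's actual sampling pattern and feature grid are more involved, and all names below are illustrative.

```python
import math


def make_signal(n):
    """Toy high-frequency 1D signal on n texels over [0, 1]."""
    return [math.sin(40.0 * (i + 0.5) / n) for i in range(n)]


def point_lookup(sig, x):
    """Nearest-texel lookup at x in [0, 1]."""
    n = len(sig)
    return sig[min(int(x * n), n - 1)]


def multisample(sig, x, width, k=16):
    """Multi-sampling: average k point lookups spread across [x - w/2, x + w/2]."""
    total = 0.0
    for j in range(k):
        pos = x + width * ((j + 0.5) / k - 0.5)
        total += point_lookup(sig, min(max(pos, 0.0), 1.0))
    return total / k


def build_pyramid(sig):
    """Pre-filtering step: halve the resolution by pairwise averaging until 1 texel."""
    levels = [sig]
    while len(levels[-1]) > 1:
        p = levels[-1]
        levels.append([(p[2 * i] + p[2 * i + 1]) / 2 for i in range(len(p) // 2)])
    return levels


def prefiltered(levels, x, width):
    """Single lookup at the level whose texel size is closest to the footprint."""
    level = round(math.log2(max(width * len(levels[0]), 1.0)))
    return point_lookup(levels[min(level, len(levels) - 1)], x)


# Usage: a wide footprint costs k lookups with multi-sampling, one with pre-filtering.
sig = make_signal(64)
levels = build_pyramid(sig)
print(multisample(sig, 0.3, 0.25), prefiltered(levels, 0.3, 0.25))
```

Both approaches target the same footprint-averaged value. The trade-off is that multi-sampling pays per query for every extra sample, while pre-filtering pays once for the pyramid and then needs one lookup per query.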